Author: Incresco, AI & Product Strategy Team

Subject Matter Expert: Content reviewed by Incresco's AI & product specialists.

Published: 2026-05-14

August 2, 2026 is the date most European technology leaders have circled on their calendars. It is the day the EU AI Act becomes fully enforceable for high-risk AI systems under Annex III. It is the day fines become real, regulators become active, and the gap between what organizations say about their AI compliance and what they can actually prove becomes legally consequential.

The most important thing to understand is this: the organizations that are going to be exposed are not the ones that ignored the regulation. They are the ones that treated it as a legal problem and handed it to the wrong team.

The Number That Should Concern Every CTO in Europe

88% of organizations already use AI in at least one business function, according to McKinsey.

Over 50% of those organizations do not have a systematic inventory of the AI systems they have in production.

40% of enterprise AI systems have unclear risk classifications under the EU AI Act, according to an appliedAI study of 106 enterprise deployments.

Put those three numbers together and the picture becomes clear. Most European enterprises are running AI systems they cannot fully account for, against a regulation they have not yet operationalised, with a deadline that does not move.

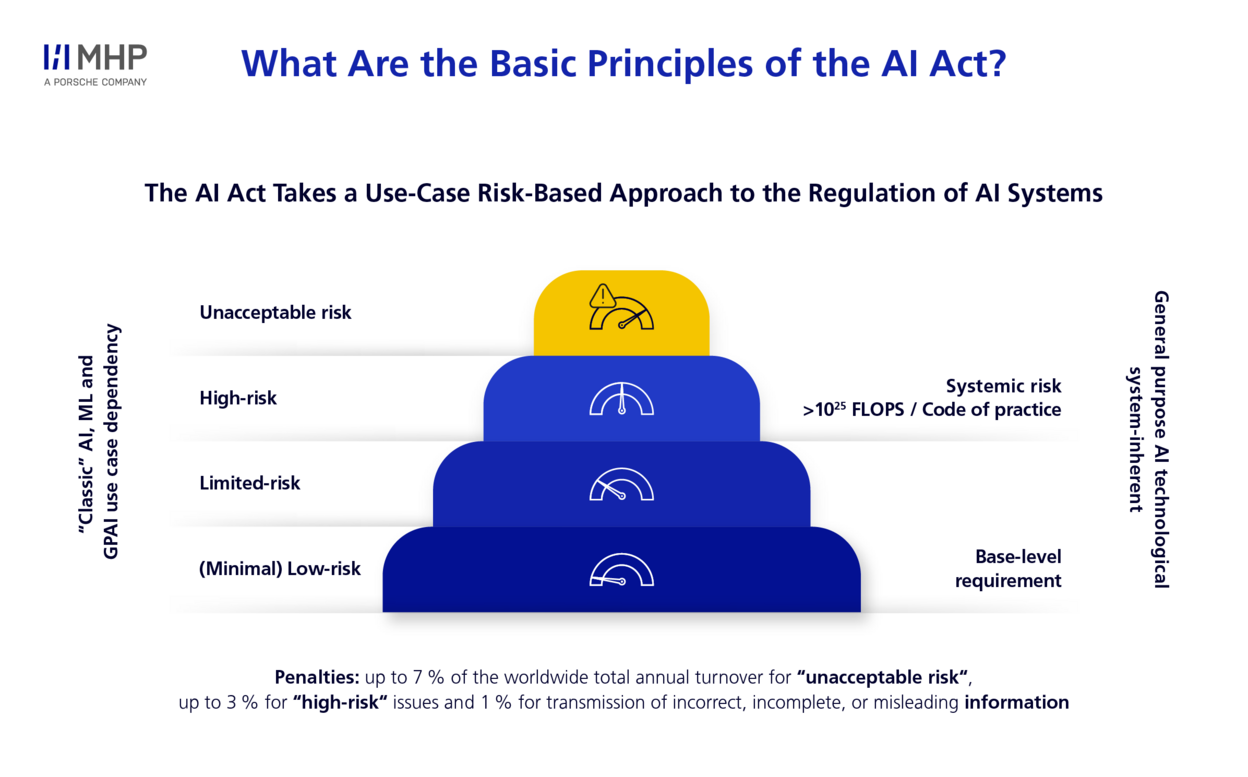

The fine structure makes this urgent at board level. The Act's top penalty tier, reserved for prohibited practices, reaches €35 million or 7% of global annual turnover, whichever is higher, which exceeds the GDPR maximum of €20 million or 4%. Non-compliance with high-risk AI obligations carries penalties of up to €15 million or 3% of global annual turnover, whichever is higher. A large enterprise spending approximately $1 million annually on AI Act compliance programs is making a rational investment. The cost of non-compliance is an order of magnitude larger.
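The "whichever is higher" rule is a simple maximum, and it is worth seeing how quickly the percentage overtakes the fixed amount. The sketch below uses the €35 million and 7% ceiling cited above as defaults; the function name and the example turnovers are illustrative assumptions, not figures from any real company or from the regulation's text.

```python
from decimal import Decimal


def penalty_ceiling(
    global_turnover_eur: Decimal,
    fixed_cap_eur: Decimal = Decimal("35000000"),
    turnover_share: Decimal = Decimal("0.07"),
) -> Decimal:
    """Return the higher of the fixed cap and the turnover-based cap."""
    if global_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    return max(fixed_cap_eur, global_turnover_eur * turnover_share)


# Hypothetical turnovers, chosen only to show both branches of the rule.
mid_size = penalty_ceiling(Decimal("200000000"))     # 7% is 14M, so the fixed cap applies
enterprise = penalty_ceiling(Decimal("2000000000"))  # 7% is 140M, so the percentage applies
print(mid_size, enterprise)
```

With these defaults the two caps meet at €500 million in turnover. Above that, exposure scales with revenue. Pass different values for `fixed_cap_eur` and `turnover_share` to model another penalty tier.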

But the fine is not the real risk. The real risk is what the compliance process reveals: that the engineering infrastructure to prove compliance was never built.

Why Most Organizations Are in the Wrong Room

Walk into most European enterprises right now and ask who is leading EU AI Act compliance. The answer is almost always: the legal team, with support from the compliance function.

That is the wrong answer.

EU AI Act compliance in 2026 is a product, engineering, and procurement problem first. The work is concrete: building logging infrastructure that produces audit-grade evidence, designing human oversight mechanisms that work at the architecture level, and integrating governance gates into development workflows.

None of that sits in a policy document. None of it is delivered by a legal memo. All of it requires engineering work that most organizations have not started.

The legal team can tell you what Article 26 requires. They cannot build the logging infrastructure that satisfies it. The compliance team can write the policy on human oversight. They cannot design the architectural mechanisms that make it real. The CTO can. The VP of Engineering can. The engineering organization can.

This is a CTO conversation that is happening in the wrong room.

What Is the EU AI Act?

The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence. Approved by the European Parliament in March 2024, formally adopted by the Council in May 2024, and in force since August 1, 2024, it establishes binding rules for how AI systems are developed, deployed, and used across the European Union.

It applies to any organization, anywhere in the world, whose AI systems produce outputs that affect people inside the EU. A company headquartered in London, Singapore, or New York that uses an AI hiring tool to screen candidates in Germany is in scope. A US fintech whose credit scoring model makes decisions affecting French customers is in scope. Geography of the provider does not determine applicability. Geography of the impact does.

The regulation was built around a single organizing principle: the higher the risk an AI system poses to people, the stricter the rules governing it. This produced the four-tier risk framework that determines every compliance obligation.

Think of it as the GDPR for AI, but with a broader operational reach. Where GDPR governed what organizations did with personal data, the EU AI Act governs what organizations do with AI systems that affect people’s lives. Employment decisions. Credit access. Educational assessment. Law enforcement. These are the domains where the regulation has its sharpest teeth.

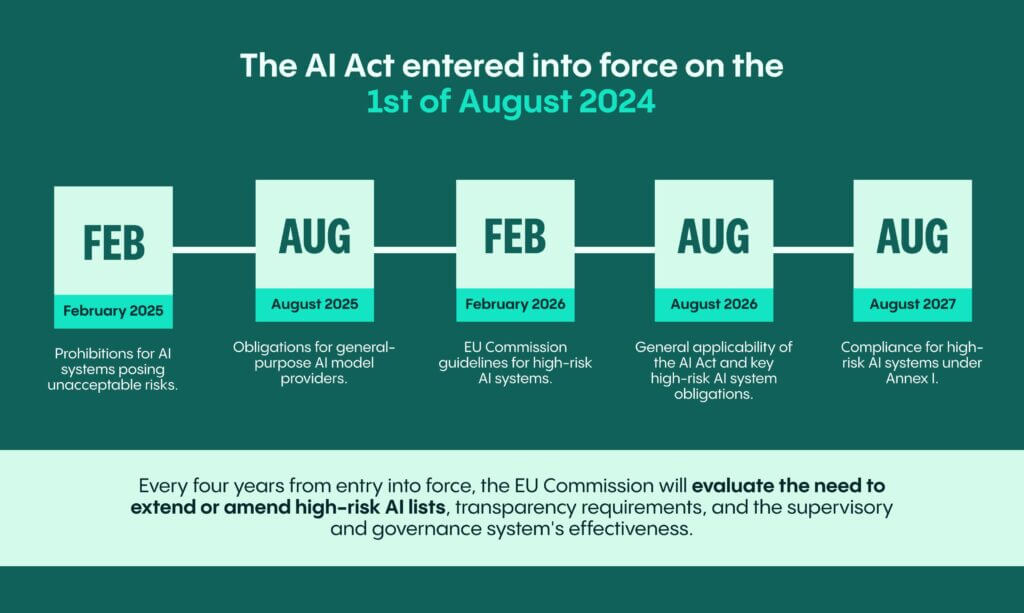

Two enforcement phases have already begun, and the third is imminent. Prohibitions on the most dangerous AI practices took effect in February 2025. Obligations for providers of general-purpose AI models began in August 2025. August 2, 2026 is the third and most significant threshold, when full compliance requirements for high-risk AI systems under Annex III become enforceable.

Unlike a directive that requires each EU member state to pass its own law, the AI Act is a regulation with direct legal effect across all 27 member states simultaneously. The same obligations apply in Berlin, Amsterdam, Paris, Stockholm, and Warsaw on the same day.

Finland became the first member state with fully operational AI Act enforcement powers in January 2026. Other national competent authorities are activating throughout the first half of 2026. Enforcement is not theoretical. It is operational.

The EU AI Act does not replace GDPR. It sits alongside it. High-risk AI systems that process personal data trigger obligations under both frameworks simultaneously. Article 27 of the AI Act requires a Fundamental Rights Impact Assessment. Article 35 of GDPR requires a Data Protection Impact Assessment. The most efficient path integrates these into a single unified process rather than running parallel compliance exercises that duplicate effort and create inconsistencies.

What the EU AI Act Actually Requires From Your Engineering Team

The regulation establishes four tiers of risk. Understanding where your AI systems sit in that framework is the first engineering task, not a legal one.

Unacceptable risk systems are banned outright. Social scoring, real-time remote biometric identification in publicly accessible spaces for law enforcement (outside narrow exceptions), and AI systems that exploit the vulnerabilities of specific groups have been prohibited since February 2025.

High-risk systems under Annex III are the category that hits most enterprises. These include AI used in hiring and recruitment, credit scoring and lending decisions, biometric identification, critical infrastructure management, educational assessment, and law enforcement tools. If your organization operates AI in any of these domains and those outputs touch EU residents, you are in scope.

Limited risk systems face transparency obligations under Article 50. Users interacting with AI chatbots must be informed they are dealing with an AI system. AI-generated content must be labeled.

Minimal risk systems face no specific obligations under the Act.

The classification challenge is real. 40% of enterprise AI systems have ambiguous risk classifications, primarily in critical infrastructure, employment, law enforcement, and product safety areas. Borderline cases require documented classification rationale, not assumptions.

Once your systems are classified, the engineering obligations for high-risk systems are specific and non-negotiable.

- Article 9 requires documented, ongoing risk management systems. Not a one-time assessment. An ongoing system.

- Article 10 requires training data governance. Data lineage, quality standards, bias testing documentation.

- Article 11 and Annex IV require complete technical documentation covering the system’s intended purpose, design decisions, training data characteristics, and performance metrics.

- Article 12 requires automatic logging of system events. Not manual records. Automated, continuous, audit-grade logs.

- Articles 13 and 14 require transparency and human oversight. Article 13 covers the information providers must give deployers. Article 14 covers human oversight mechanisms that function at the architectural level.

- Article 26 defines deployer obligations specifically. This is the article that catches most organizations unprepared. Vendor documentation does not satisfy Article 26. You must demonstrate how each high-risk AI system is used inside your own operating environment. That requires internal records, monitoring processes, and escalation procedures that your team built and owns.
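To make the Article 12 point concrete, here is a minimal sketch of what automated, tamper-evident logging could look like. The hash-chaining design, the class name `AuditLog`, and the field names are assumptions chosen for illustration. The Act requires automatic recording of events; it does not prescribe this structure, and a production system would also need durable storage and retention controls.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """Stable SHA-256 over the JSON form of an entry (keys sorted)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only log where each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def record(self, system_id: str, event: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system_id": system_id,
            "event": event,
            "details": details,
            "prev_hash": prev_hash,
        }
        body["hash"] = _digest(body)  # hash is computed before the key is added
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            unhashed = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(unhashed):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("cv-screener", "inference", {"candidate": "c-1", "outcome": "shortlist"})
log.record("cv-screener", "human_override", {"candidate": "c-1", "reviewer": "hr-lead"})
print(log.verify())
```

The design choice that matters is that entries are written by the system at the moment of the event and cannot be quietly edited afterwards. That is the difference between evidence and records assembled retroactively.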

The Question Most Compliance Teams Cannot Answer

Ask your compliance team this question today:

“Can we produce an internal record showing how each high-risk AI system is used inside our organization?”

The answer is usually no. Not because people are careless, but because they assumed vendor documentation would cover the deployer’s own processes. A provider can share instructions for use, system documentation, and testing information. The deployer still has to evidence what happened inside its own operating environment.

The inventory gap is where most enterprises are most exposed. When asked to name every AI system in use, most organizations identify three or four obvious systems. Then the HR team mentions a screening tool running in pilot. The legal team mentions a contract classification system. Finance mentions an anomaly detection model in their fraud stack. Each one is a potential Annex III system with compliance obligations nobody has mapped.

More than half of organizations lack a systematic register of what AI systems are in production or development. Before you can classify risk, you need to know what you have. Every AI tool, model, and automated decision system needs to be catalogued, including those procured from third parties and embedded in enterprise SaaS platforms.

This is where compliance fails. Not in the policy. In the inventory.
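A register does not need to start as a platform. The sketch below shows one possible shape for an inventory entry and a check that surfaces systems nobody has mapped yet. The field names, the status and source values, and the example entries are all hypothetical; the three systems simply mirror the examples mentioned above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystem:
    name: str
    owner: str                        # team accountable for the system
    source: str                       # "in-house", "third-party", or "embedded-saas"
    status: str                       # "production", "pilot", "development", "procurement"
    tier: Optional[RiskTier] = None   # None means nobody has classified it yet
    rationale: str = ""               # written reasoning behind the tier


def unmapped(register: list[AISystem]) -> list[str]:
    """Names of systems with no tier, or a tier with no written rationale."""
    return [s.name for s in register if s.tier is None or not s.rationale.strip()]


register = [
    AISystem("cv-screening-pilot", "HR", "third-party", "pilot"),
    AISystem("contract-classifier", "Legal", "embedded-saas", "production",
             tier=RiskTier.MINIMAL),
    AISystem("fraud-anomaly-model", "Finance", "in-house", "production",
             tier=RiskTier.HIGH,
             rationale="Feeds decisions that affect customer access to services."),
]
print(unmapped(register))
```

Note that a tier without a rationale still counts as unmapped here. That reflects the point made earlier: borderline cases need documented reasoning, not assumptions.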

The Shadow AI Problem Nobody Is Accounting For

There is a second exposure that sits underneath the formal compliance conversation.

64% of workers bypass corporate security with personal logins and unauthorized AI tools, according to industry analysis. Under the EU AI Act, that is not an IT problem. It is a regulatory exposure.

If an employee uses an unauthorized AI tool to make a decision that affects an EU resident, and that tool falls within an Annex III category, the organization may be liable regardless of whether the tool was sanctioned internally. The Act does not distinguish between approved and shadow AI when assigning responsibility for outputs that affect people.

This makes the compliance perimeter significantly wider than most organizations have drawn it. It also makes the governance conversation a cultural and organizational question, not just a technical one.

What Demonstrable Compliance Actually Requires

The distinction that matters most heading into August 2026 is between claiming compliance and demonstrating it.

Claiming compliance: A policy document exists. The legal team has reviewed it. The compliance team signs off quarterly.

Demonstrating compliance: A regulator walks into your organization and asks to see how your hiring AI system makes decisions, how those decisions are logged, who has oversight authority, and what happens when the system produces an anomalous output. You can show them. In real time. With documentation that was generated automatically, not assembled retroactively.

The engineering work that creates demonstrable compliance has five components.

- AI system inventory. Every AI system in production, in development, and in procurement pipelines catalogued and classified against the Annex III risk categories. This is a living document, not a one-time audit.

- Audit-grade logging infrastructure. Automatic event logging for every high-risk AI system, structured to satisfy Article 12. Not application logs that happen to capture AI events. Purpose-built logging that produces the evidence format regulators will expect.

- Human oversight architecture. Oversight mechanisms designed at the system architecture level, not bolted on after deployment. This means defined escalation paths, intervention capabilities, and documented decision authority built into the workflow, not described in a policy.

- Technical documentation under Annex IV. Complete documentation covering intended purpose, design decisions, training data characteristics, performance metrics, and known limitations. Maintained continuously, not produced at point of audit.

- Governance gates in development workflows. Compliance checks embedded in the development and deployment process itself, so new AI systems cannot reach production without satisfying the classification and documentation requirements.

None of this is produced by a legal review. All of it requires engineering decisions, engineering infrastructure, and engineering ownership.
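As an illustration of the human oversight component, the sketch below wraps a model so that uncertain outputs are routed to a named reviewer before they become decisions. The confidence threshold, the function names, and the stub model are assumptions made for the example. The Act does not prescribe a threshold, and real oversight would also need the ability to override or halt the system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    subject_id: str
    outcome: str
    confidence: float
    decided_by: str  # "model", or the identifier of the human reviewer


def with_oversight(
    model: Callable[[str], tuple[str, float]],
    reviewer: Callable[[str, str, float], str],
    reviewer_id: str,
    threshold: float = 0.9,
) -> Callable[[str], Decision]:
    """Return a decision function that escalates low-confidence outputs."""

    def decide(subject_id: str) -> Decision:
        outcome, confidence = model(subject_id)
        if confidence < threshold:
            # Escalation path: the human's answer replaces the model's.
            final = reviewer(subject_id, outcome, confidence)
            return Decision(subject_id, final, confidence, reviewer_id)
        return Decision(subject_id, outcome, confidence, "model")

    return decide


# Stub model and reviewer, for demonstration only.
def stub_model(subject_id: str) -> tuple[str, float]:
    return ("reject", 0.55) if subject_id == "c-2" else ("shortlist", 0.97)


def stub_reviewer(subject_id: str, outcome: str, confidence: float) -> str:
    return "shortlist"


decide = with_oversight(stub_model, stub_reviewer, reviewer_id="hr-lead")
print(decide("c-1"))
print(decide("c-2"))
```

Because `decided_by` is recorded on every decision, the question "who had oversight authority here?" is answered by the data rather than by a policy document.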

The Cost of Waiting

The AI governance platform market, valued at approximately $340 million in 2025, is projected to grow at a compound annual growth rate above 28% through the end of the decade. Gartner projects that effective governance technologies could reduce regulatory compliance costs by 20%.

Large enterprises are spending an estimated $8 to $15 million on compliance programs for high-risk AI systems. That investment, made early and structured correctly, builds infrastructure that serves the organization beyond the August 2026 deadline. The same documentation, logging, and oversight mechanisms that satisfy the EU AI Act also make AI systems more reliable, more auditable, and more trustworthy to enterprise customers.

The organizations that treat this as a compliance burden are spending money to satisfy a regulator. The organizations that treat it as an engineering standard are building infrastructure that compounds in value.

There is also a market differentiation advantage that has received less attention than it deserves. Enterprises that build governed, documented, auditable AI systems are the ones that will win enterprise contracts in regulated industries, secure procurement approvals in public sector deals, and earn the trust of customers increasingly sensitised to how AI affects their lives.

The August 2026 deadline converts responsible AI practices into legal requirements. But organizations that have been doing this work already are about to gain a meaningful competitive advantage over those that have not.

What the Best-Prepared Organizations Are Doing Right Now

The technology leaders navigating this well share a consistent pattern. It is not about budget. It is about who owns the problem.

In the organizations moving fastest, the CTO or VP of Engineering has taken direct ownership of the compliance engineering work. Not delegated it to legal with engineering support. Owned it, with legal as an advisor.

The engineering team has completed an AI system inventory that goes beyond the obvious enterprise systems into the departmental tools, the pilot programs, and the third-party integrations that sit inside SaaS platforms already in use.

The development workflow has been updated to include compliance gates. New AI systems require classification documentation before they reach staging environments. Annex IV documentation is a delivery requirement, not a retrospective exercise.

And the logging infrastructure is being built now, not in July.
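The compliance gates described above can be as simple as a check that runs in the deployment pipeline and blocks promotion when evidence is missing. This is a minimal sketch under stated assumptions: the section names loosely follow the documentation topics listed earlier in this piece and are not the official Annex IV structure, and `ReleaseCandidate` and `gate` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical section names, not the official Annex IV headings.
REQUIRED_SECTIONS = {
    "intended_purpose",
    "design_decisions",
    "training_data",
    "performance_metrics",
    "known_limitations",
}


@dataclass
class ReleaseCandidate:
    system_name: str
    risk_tier: Optional[str] = None          # e.g. "high", "limited", "minimal"
    classification_rationale: str = ""
    documentation_sections: set[str] = field(default_factory=set)


def gate(candidate: ReleaseCandidate) -> list[str]:
    """Return the reasons a candidate may not be promoted; empty means pass."""
    problems = []
    if candidate.risk_tier is None:
        problems.append("missing risk classification")
    elif not candidate.classification_rationale.strip():
        problems.append("missing classification rationale")
    if candidate.risk_tier == "high":
        missing = sorted(REQUIRED_SECTIONS - candidate.documentation_sections)
        problems.extend(f"missing documentation: {name}" for name in missing)
    return problems


candidate = ReleaseCandidate(
    "cv-screener",
    risk_tier="high",
    classification_rationale="Used to screen job applicants.",
    documentation_sections={"intended_purpose", "design_decisions"},
)
for problem in gate(candidate):
    print(problem)
```

In a pipeline, a non-empty result would fail the build. That is what makes documentation a delivery requirement rather than a retrospective exercise.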

The Incresco Approach

At Incresco, the work we do with European technology teams on EU AI Act compliance starts in the same place every time.

Not with a policy review. Not with a legal assessment. With an AI system inventory.

We map what is running, what is in development, and what is embedded in third-party systems. We classify each system against the Annex III risk categories and document the classification rationale. We identify where the Article 26 gaps are, specifically where internal usage records do not exist or where vendor documentation is being relied upon to cover deployer obligations.

From there, the engineering work is specific and sequenced. Logging infrastructure before documentation. Oversight architecture before governance policy. Inventory before everything.

The organizations we work with are not the ones that missed the regulation. They are the ones that understood early that compliance is an engineering problem and wanted a partner who could build the infrastructure, not just describe it.

If your AI systems are in production and Annex III mapping has not started, that conversation is already overdue.

The Timeline You Need to Operate Against

February 2, 2025: Prohibitions on unacceptable-risk AI practices became enforceable. If your organization has not already addressed this, the exposure is live now.

August 2, 2025: Obligations for general-purpose AI model providers began. If you are integrating foundation models into your products, confirm whether your role or your modifications bring you into scope.

August 2, 2026: Full compliance requirements for Annex III high-risk AI systems become enforceable. Penalties activate. National competent authorities begin enforcement.

December 2, 2027: Potential backstop for certain high-risk systems if the European Commission’s Digital Omnibus package is formally adopted. This is a conditional delay under negotiation, not current law. Compliance experts uniformly advise treating August 2026 as the binding date.
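The milestones above are easy to keep as data, which makes the remaining runway a calculation rather than a guess. The sketch below uses only the dates listed in this timeline; the dictionary keys and the function name are illustrative.

```python
from datetime import date

MILESTONES = {
    "prohibitions enforceable": date(2025, 2, 2),
    "GPAI provider obligations": date(2025, 8, 2),
    "Annex III high-risk requirements": date(2026, 8, 2),
    "proposed Digital Omnibus backstop (not current law)": date(2027, 12, 2),
}


def days_until(milestone: str, today: date) -> int:
    """Days from `today` to the milestone; negative if it has already passed."""
    return (MILESTONES[milestone] - today).days


published = date(2026, 5, 14)
print(days_until("Annex III high-risk requirements", published))  # 80
```

Measured from this article's publication date, the Annex III threshold is 80 days away.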

The engineering work takes longer than most teams expect. The inventory alone typically surfaces systems that require months of documentation work before they can satisfy Annex IV requirements.

The organizations that start this week will be demonstrating compliance in August. The ones that wait for legal clarity on the Digital Omnibus will be explaining their readiness plan to a regulator.

Start With One Question

If you take nothing else from this piece, take this.

Ask your compliance team, your CTO, and your VP of Engineering to answer one question together this week:

“Can we demonstrate, not just claim, how each high-risk AI system is being used inside our organization right now?”

The answer to that question tells you where you are.

If the answer is yes, with documentation, with logging infrastructure, with human oversight architecture that a regulator could inspect: you are in the second group. The group that has figured out that this is an engineering problem.

If the answer is no, or if the room goes quiet: you know what the next 80 days need to look like.

Incresco is working with engineering teams across Germany, the Netherlands, France, and the Nordics on exactly this work. Not policy. Not legal review. The engineering infrastructure that makes August 2, 2026 a date your organization is ready for rather than one it is scrambling toward.

If this is the conversation you need to have, we are ready to have it.

Book a 30-minute working session with Incresco

Incresco is a technology consulting firm working with global enterprises on AI engineering, production readiness, and regulatory compliance. This piece was written in May 2026 and reflects the regulatory position as of that date.
